Why AI triage keeps failing in practice

Care work, long considered deeply human, is increasingly intertwined with technology and its promises of efficiency, personalisation and accessibility. In healthcare, these pressures are especially visible in triage, where rising patient volumes and persistent nursing shortages have intensified the demand for faster and more scalable ways of assessing patient needs.

Chris Ferguson is a design leader, professor and applied researcher at the University of Toronto, and the founder and former CEO of the service design studio Bridgeable. His current work focuses on applied research and practice around public service transitions - helping organisations navigate complexity, integrate AI and translate strategy into effective services.

Matt Ratto is Associate Dean, Research and a full Professor in the Faculty of Information at the University of Toronto. His research explores the social production of knowledge in the context of emerging digital technologies and leverages critical theories and perspectives from the humanities and social sciences to develop novel insights and design paradigms.

Triage in nursing is a critical, specialised process of rapidly assessing and prioritising patient concerns to ensure that the most urgent cases receive immediate attention. Beyond prioritisation, triage nurses manage complex, high-acuity patient populations, particularly in high-stakes environments such as cancer care, by providing 24/7 symptom support, guiding patients to appropriate care and helping reduce unnecessary emergency department (ED) visits. As workloads increase and staffing gaps persist, triage has become an especially appealing target for AI-enabled tools that promise to extend clinical capacity and streamline decision-making.

Yet, despite this promise, most AI-enabled triage initiatives fail to deliver meaningful or sustainable improvements.

The challenge lies in how technology reshapes the relational and organisational ecology of care. Scholars in science and technology studies (STS) and related fields highlight the ‘socio-technical’ nature of work, emphasising that care work in particular is fundamentally relational, embedded in socio-technical systems where professionals, technologies and institutions co-evolve (Mol, 2008; Suchman, 2007).

Technology is not neutral: it mediates workflows, redistributes responsibility and redefines professional boundaries. AI diagnostics and co-ordination platforms illustrate this duality: they can augment capacity and streamline work, but also risk deskilling labour, increasing workload or diminishing the relational dimensions of care (Greenhalgh et al., 2019; Susskind & Susskind, 2015).

These dynamics create ethical and practical tensions often overlooked in AI projects. Efficiency-driven solutions can inadvertently undermine caregiver autonomy, patient agency or the subtle relational work that sustains quality care. Across healthcare, aged care and social services, technology always reshapes work, producing trade-offs between optimisation, relational labour and equity.

For practitioners, this underscores a critical insight: AI adoption is not a purely technical challenge. It requires attention to how design decisions redistribute effort, influence relationships and surface trade-offs that emerge from the interdependencies of roles, practices and technologies – an understanding essential for realising AI’s intended benefits.

Reframing AI design: From requirements to trade-offs

Most AI projects still follow a familiar path: research, synthesise insights, translate into requirements. This assumes problems are bounded and solutions stable, assumptions that collapse in complex service systems like triage.

AI reshapes how value, authority and responsibility are distributed. Every design choice produces trade-offs: nurses may gain efficiency but lose discretion; patients may receive faster responses at the cost of relational care; organisations may increase capacity while intensifying staff strain. These trade-offs are not just implementation challenges – they are the core design problem, exacerbated by the differing needs and values of different stakeholders.

As Jon Iwata, former IBM executive and Yale School professor, observes, organisations that operate at the intersection of service users, employees, funders, investors and society are constantly navigating “competing interests or often conflicting interests”. The challenge is not whether trade-offs exist, but whether they lead to informed choices. Yet, as Iwata notes, “making these kinds of trade-offs doesn’t come naturally”.

The shift also redefines the role of service designers. At Volkswagen Group, Linus Schaaf describes designers moving beyond simply providing requirements toward actively defining trade-offs between stakeholders. Designers, he argues, will inevitably be “compromising between tensions” – but crucially, “the designer will define the compromise”. Reframing design from “What should the system do?” to “What trade-offs are we making?” surfaces these value judgments and enables grounded, honest conversations.

Context: Designing under pressure at Princess Margaret Cancer Hospital

This project was a collaboration between members of Cancer Digital Intelligence, a design and technology team operating inside Princess Margaret Cancer Centre (PMCH), and applied researchers and graduate students from the University of Toronto. The exploration of AI triage formed part of a broader change initiative that involved redistributing labour among staff, redefining nursing roles, and adapting workspaces and tools. AI triage was introduced at a time when PMCH was operating under high pressure, with overlapping and often conflicting priorities.

From executive leadership, the mandate was clear: respond to rising cancer rates and more patients living longer after cancer treatment while operating under financial constraint. Leaders sought to address nursing shortages, explore meaningful AI adoption, optimise workflows with digital tools and make use of newly acquired facilities by relocating triage nurses outside of busy clinics. These ambitions emphasised efficiency, capacity and measurable performance.

At the same time, nursing staff were operating under sustained strain. Chronic understaffing, exacerbated by the Covid-19 pandemic, had intensified workload and burnout. Nurses described high stress, accelerated onboarding of new staff and scepticism toward yet another system promising improvement. They were also navigating tensions within their professional identity: balancing relational, judgment-driven care with growing expectations for digitisation and remote work. Even well-intentioned innovation risked being perceived as an additional burden rather than support.

Meanwhile, the internal design team operated amid misaligned change timelines and complex accountability structures. Tasked with developing a new centralised triage space called the ‘ROC’ (Remote Oncology Centre), they navigated union considerations, operational risks of relocating triage offsite and the need to make decisions with ‘just enough’ research rather than perfect certainty.

Case study: When service design meets socio-technical theory

To understand how AI could be adopted into nurse triage, the team spent two months embedded in situ, observing nurses across shifts and cancer types. In addition to the well-understood components of triage, such as calls, handoffs and coordination, the team documented components of care work that are often invisible, such as how nurse experience shaped clinical judgment, the emotional labour of managing patients in crisis and the importance of informal peer support.

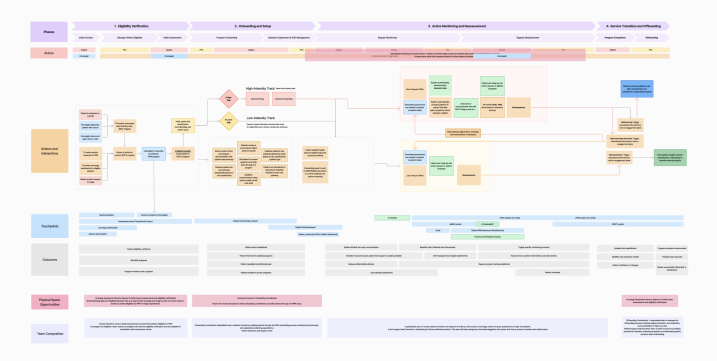

Analysis began with core service design tools. Service maps identified activities, handoffs and outcomes, clarifying how triage functioned across channels and roles. In parallel, interviews with the working group and nurses explored where AI augmentation might support existing practice. This dual lens – mapping current workflows while exploring augmentation opportunities – surfaced early possibilities for intervention.

From the outset, however, the team anticipated that mapping alone would be insufficient to guide responsible AI adoption. Because the goal was not merely optimisation but informed negotiation of trade-offs, the team intentionally complemented service design tools with a socio-technical perspective.

Service design methodologies are well suited to uncovering the complex and often invisible dimensions of services. Blueprinting and system mapping reveal relationships across time, touchpoints and stakeholders, helping teams orchestrate work across silos. Yet in moments of technological transition – particularly with AI – services cannot be treated as stable systems to be optimised. AI reshapes professional knowledge, redistributes accountability and redefines what counts as legitimate work. Questions of discretion, authority, collaboration and identity move to the foreground.

To account for these deeper shifts, we integrated a socio-technical lens into the analysis from the beginning. Socio-technical perspectives treat services as dynamic assemblages in which tools, roles, norms and institutions co-constitute one another. Rather than viewing AI as an add-on, this perspective stresses how introducing new tools transforms relationships between people, tasks and organisational structures. For service designers, this reframes the challenge: the task is not only to optimise touchpoints, but to understand how automation changes the meaning of work, reshapes collaboration and potentially erodes elements practitioners consider core to care.

Analysis was conducted in two ways. Service maps identified activities and outcomes (see Figure 1). At the same time, the team considered where AI augmentation could be added to triage based on interviews with the working group and nurse observation.

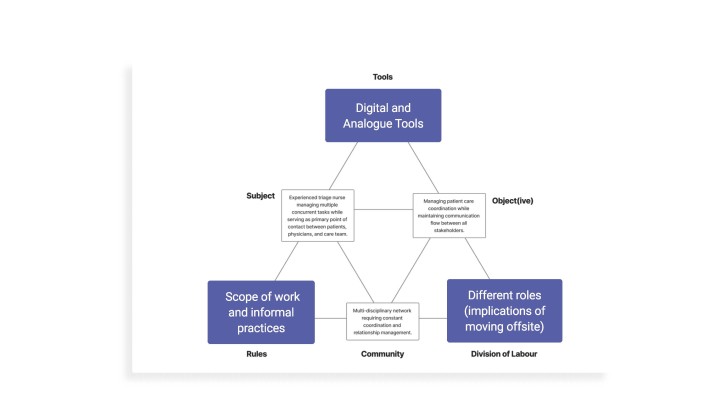

To operationalise this perspective, the team drew on ‘activity theory’, as developed for design contexts by Bonnie Nardi and Victor Kaptelinin (Kaptelinin & Nardi, 2006). Using a simplified ‘activity triangle’ (see Figure 2), we focused analysis on three interdependent elements: tools, division of labour and scope of work. This allowed us to explore the dynamic tension between three moves: automating tasks with AI; redistributing labour by relocating triage nurses offsite, which separated them from oncologists and administrators; and removing or redefining aspects of nursing practice.

Rather than asking where AI could ‘fit’, we used multiple activity theory triangles to visualise tensions and simulate consequences – examining how specific configurations might shift cognitive load, relational trust and service outcomes. Through this combined approach, AI was not treated as a neutral touchpoint to be inserted into an existing service. Instead, it was examined as an active agent that mediates workflows, redistributes responsibility and redefines professional practice. The activity triangle provided a structured way to anticipate these shifts, enabling more responsible and context-sensitive design interventions grounded in the realities of clinical work.

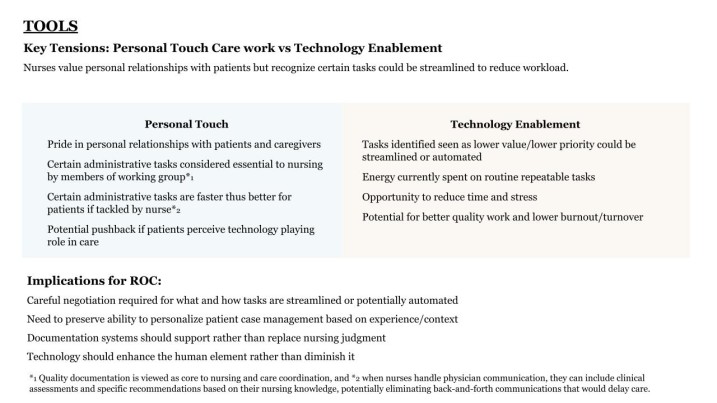

A key outcome of combining service design with activity theory was the ability to surface structured trade-offs and use them to guide the cross-functional working group. Research findings highlighted trade-offs and pointed to implications for triage at PMCH and the ROC (see Figure 3).

The most valuable insights emerged at points where proposed substitutions, such as automating a task or narrowing a role, carried broader consequences. When automation touched clinical discretion, or when role filtering reduced the scope of practice, the effects extended well beyond efficiency gains. Identifying these fault lines shifted the conversation from whether to adopt a feature to how that feature would reshape the system as a whole.

Two trade-offs proved especially generative:

- Relational care vs. technology enablement: Automation could reduce repetitive administrative work, but it also risked flattening nuanced clinical assessments and diminishing relational aspects of care.

- Flexibility vs. defined scope: AI systems could help redirect and redistribute tasks, but rigid guardrails risked constraining professional judgment in complex or ambiguous cases.

By highlighting these trade-offs, AI adoption became less about technical deployment and more about negotiating autonomy, workload, authority and patient experience. Making these tensions visible by framing research around trade-offs and implications created shared ownership among stakeholders and established a foundation for informed co-design, channelling disagreement into concrete, real-world considerations rather than abstract resistance.

Making trade-offs tangible: Storyboards and prototypes

Recent data suggests that many employees experience little time savings from AI in their daily work and often feel overwhelmed by expectations to incorporate it. At the same time, senior leaders frequently anticipate significant efficiency gains. This gap between executive optimism and frontline realities creates risk: when the consequences of AI remain abstract, resistance often surfaces only during implementation.

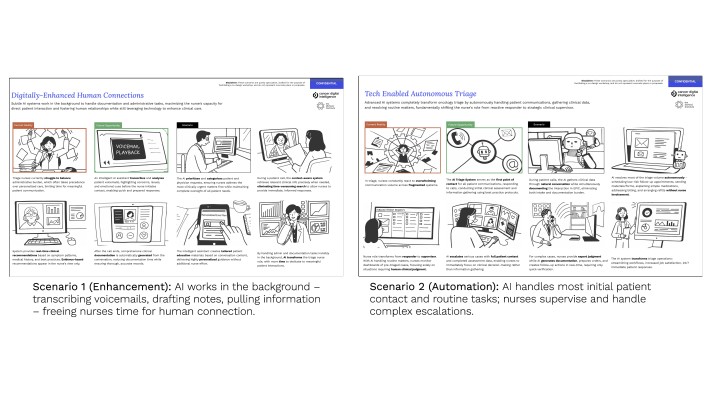

To expose consequences within triage, we translated identified trade-offs into tangible, experiential artefacts. Rather than debating AI in principle, we developed stratified ‘day-in-the-life’ storyboards (see Figure 4) that contrasted alternative futures. These scenarios made the implications of automation, augmentation, discretion and scope visible before rollout, allowing stakeholders to engage with consequences rather than assumptions.

Two sets of storyboards were particularly useful:

Triage AI toolset

- Enhancement: AI works in the background – transcribing voicemails, drafting notes, pulling data – freeing time for patient interaction.

- Automation: AI handles initial contact and routine tasks; nurses supervise escalations.

Scope of the new triage role

- Flexible: Nurses exercise discretion, supported by intelligent tools.

- Guardrails: Automated filtering enforces strict role boundaries, protecting time for core work.

As prototypes increased in fidelity, one principle guided the work: AI could not simply be layered onto existing practice. Instead, it had to be integrated as part of a reconfigured socio-technical system that reshaped roles, workflows and relationships.

Earlier work surfaced core trade-offs – efficiency and relational care, discretion and standardisation, flexibility and protection. Rather than resolving them abstractly, the team embedded them directly into design decisions and involved stakeholders in this process. Each feature was evaluated not only for functionality, but for how it redistributed cognitive load, responsibility and clinical authority.

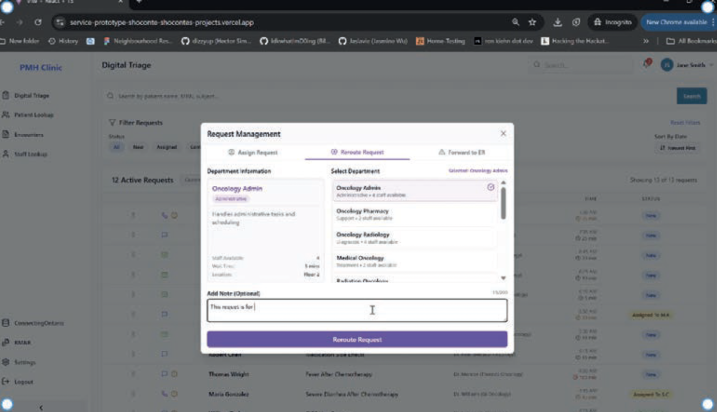

‘Smart Documentation’ unified triage requests across phone, email and internal channels, pairing transcripts with AI-assisted summaries and relevant electronic medical record (EMR) data. This reduced cognitive fragmentation and freed nurses to focus on clinical reasoning and patient interactions.

‘AI Recommendations’ surfaced relevant patient information, suggested next steps with rationale, transcribed conversations in real time and attached pre-approved resources. Crucially, nurses retained authority to accept, modify or ignore suggestions – preserving professional judgment while reducing repetitive effort. Documentation was streamlined while still allowing nurses to see relevant details.

Role boundaries were clarified: routine tasks could be streamlined or redirected, preventing triage from functioning as a catch-all, while maintaining flexibility for complex cases (see Figure 5). In the layouts and renderings of the physical space, the ROC design incorporated collaboration zones and communication touchpoints to protect peer support and relational cohesion.

Prototypes translated abstract trade-offs into concrete, observable consequences. Working group and nurse co-design sessions used these scenarios to explore practical implications, refine decisions and internalise the dynamic tensions among roles, tools and nurses’ changing scope of practice. Conflict was not eliminated but became structured, actionable and productive. Stakeholder engagement shifted to deeper, more context-rich discussions about where work could be automated or reallocated to improve the quality of nurses’ work while maintaining relational care: care that moves beyond treating physical symptoms to understanding the patient’s context in order to improve health outcomes and emotional well-being.

A framework for designing AI transitions in practice

The case illustrates a broader lesson: when AI is introduced into complex services, we must take a more socio-technical approach. Traditional, product-focused design methods emphasise user needs and organisational goals, but they cannot effectively support service transitions because they are not equipped to navigate interconnected social and technological systems. Yet many teams still lack a structured way to navigate the socio-technical trade-offs involved.

To make this work usable by other practitioners, we synthesised our approach into a four-stage framework that can be applied to other AI-enabled service transformations. The framework is not linear but iterative. Its purpose is to help teams deliberately surface tensions, prototype consequences and design across the full care ecology rather than at the interface layer alone.

A design diplomacy framework

1. Frame the core trade-offs

In AI initiatives, stakeholders often speak past one another, for example:

- Leadership emphasises efficiency and capacity

- Clinicians emphasise autonomy and relational care

- Operational teams emphasise risk and workload

When trade-offs remain implicit, debates stall or become political. Present your research in a way that emphasises critical trade-offs (see Figure 1).

Instead of starting with requirements, begin by articulating the competing values at stake, for example:

- Efficiency vs. relational care

- Standardisation vs. discretion

- Capacity vs. workload intensification

- Automation vs. skill preservation

- Role protection vs. flexibility

For each proposed intervention, ask:

- What becomes easier?

- What becomes constrained?

- Who gains efficiency?

- Who absorbs risk or cognitive load?

- What dimension of care becomes less visible?

Rationale

In our case, explicitly framing trade-offs transformed meetings. Stakeholders could see competing priorities side-by-side. Complexity became the substance of discussion rather than something to avoid. This broadened participation by creating counterpoints to dominant participants, reduced siloed interpretation and shortened conflict cycles by anchoring debate in consequences rather than positions.

2. Model tools, roles and scope of practice

Once trade-offs are articulated, analyse how AI redistributes work in concrete terms.

Begin with a simplified view of the activity system, focusing on the dynamic interplay between three interdependent elements:

- Tools – What the AI system automates, augments, filters or recommends.

- Division of labour – How tasks and responsibilities shift across actors and teams.

- Scope of practice – How professional authority, discretion and identity expand, narrow or transform.

Rather than examining these elements in isolation, interrogate their tensions using the activity theory model (see Figure 2).

Ask:

- When this tool is introduced, which tasks move, disappear or intensify – and for whom?

- Where does discretion become constrained by guardrails, and where does it expand through new visibility or data?

- How does redistributing labour affect relational continuity with patients and peers?

- If scope is narrowed to increase efficiency, what aspects of relational or judgment-based care are at risk of erosion?

- Does augmentation meaningfully reduce workload, or does it shift cognitive and co-ordination effort elsewhere?

- What new dependencies are created between clinicians and the system, and what happens when the system fails or is bypassed?

These questions emphasise how tools, division of labour and scope of practice co-evolve. A change in one inevitably reshapes the others.

Rationale

In our project, early disagreements were driven less by interface details than by uncertainty about how professional roles would shift. By concentrating analysis on the interaction between tools, labour distribution and scope, we made redistribution of authority and workload visible. This reduced diffuse anxiety and enabled nurses and leadership to evaluate impacts on efficiency, autonomy, cognitive burden and relational care with greater precision.

As stakeholders began to see how specific features translated into structural consequences, AI literacy increased. Discussions moved beyond abstract enthusiasm or resistance toward informed negotiation of trade-offs embedded in daily work.

3. Stratify prototypes to amplify trade-offs

Trade-offs become real only when they are experienced.

Instead of converging on a single solution, deliberately create contrasting ‘day-in-the-life’ scenarios that exaggerate alternative futures (see Figure 4), for example:

- Augmentation vs. automation

- Flexible discretion vs. enforced guardrails

- Broad vs. tightly focussed scope of practice

Make visible how each affects, for example:

- Autonomy

- Relational care

- Cognitive load

- Accountability

- Peer collaboration

Rationale

In our case, stratified storyboards reduced the intensity and duration of conflict. Rather than debating hypotheticals, stakeholders reacted to concrete depictions of daily work. Nurses could anticipate impacts on professional identity, while leadership could see efficiency gains alongside potential erosion of care quality. Disagreement became specific and actionable rather than manifesting as generalised resistance.

4. Iterate transparently across the service ecology

AI does not only change tasks – it reshapes service ecosystems. Synthesise feedback on storyboard trade-offs and increase the fidelity of AI prototypes. As fidelity increases, document and communicate your rationale for how AI impacts:

- Technology

- Workflow

- Scope of practice

- Governance

- Physical space

- Metrics of success (worker satisfaction, attrition, patient volume, etc.)

During each iteration, explicitly document:

- What trade-off is being accepted?

- Why is it acceptable?

- Who benefits?

- Who carries an additional burden?

- What relational elements are preserved or reduced?

Rationale

Transparent iteration led to clarity and cohesion. Nurses reported feeling recognised and included. Leadership gained a clearer understanding of how efficiency, autonomy and patient experience interact. Over time, the working team developed the capacity to evaluate AI proposals deliberately rather than reactively. The path to adoption improved not because conflict disappeared, but because it was explored in a structured and generative way.

AI service ecosystem design: Negotiating trade-offs

Applied to the ROC, this framework operationalised AI as part of a redesigned service ecology rather than as a layer added onto existing practice. Tools supported judgment instead of replacing it. Division of labour was clarified without over-constraining flexibility. Scope of practice was intentionally protected where discretion mattered most.

The outcome was not frictionless alignment, but informed compromise. Stakeholders internalised trade-offs, conflict cycles shortened, stakes were lowered by using prototypes and the organisation built capacity to design future AI initiatives with greater confidence and literacy.

Designing with trade-offs

Applying the framework transformed both the process and the outcomes of AI adoption.

Revealing systemic trade-offs

By surfacing competing priorities explicitly, discussions shifted from positional debates to grounded evaluation of consequences. Complexity became actionable rather than abstract, broadening participation and shortening conflict cycles.

Prototyping consequences

Stratified storyboards made trade-offs tangible. Stakeholders could anticipate impacts on autonomy, workload, relational care and efficiency, enabling informed decision-making. Disagreement became structured, concrete and productive.

Building capability

Iteration and transparency strengthened team cohesion and AI literacy. Nurses felt recognised and empowered, leadership gained clarity on efficiency vs. discretion trade-offs and the organisation benefitted from a method for evaluating AI interventions.

Conclusion for practitioners

Success in AI-enabled services depends less on technical performance than on how well teams can identify, visualise and negotiate trade-offs. By framing conflict, modelling socio-technical dynamics, stratifying prototypes and iterating transparently, designers can integrate AI in ways that preserve relational work, protect professional discretion and support sustainable adoption.

Special thanks to iSchool graduate students Kshitij Anand, Sho Conte and Ethan Rong, and to Mike Lovas and CDI, along with the many nurses and working group team members who made this work possible.

This article was published in Touchpoint Vol. 17 No. 1. You may find the full issue here: https://www.service-design-network.org/touchpoint/from-ai-to-synthetic-services
